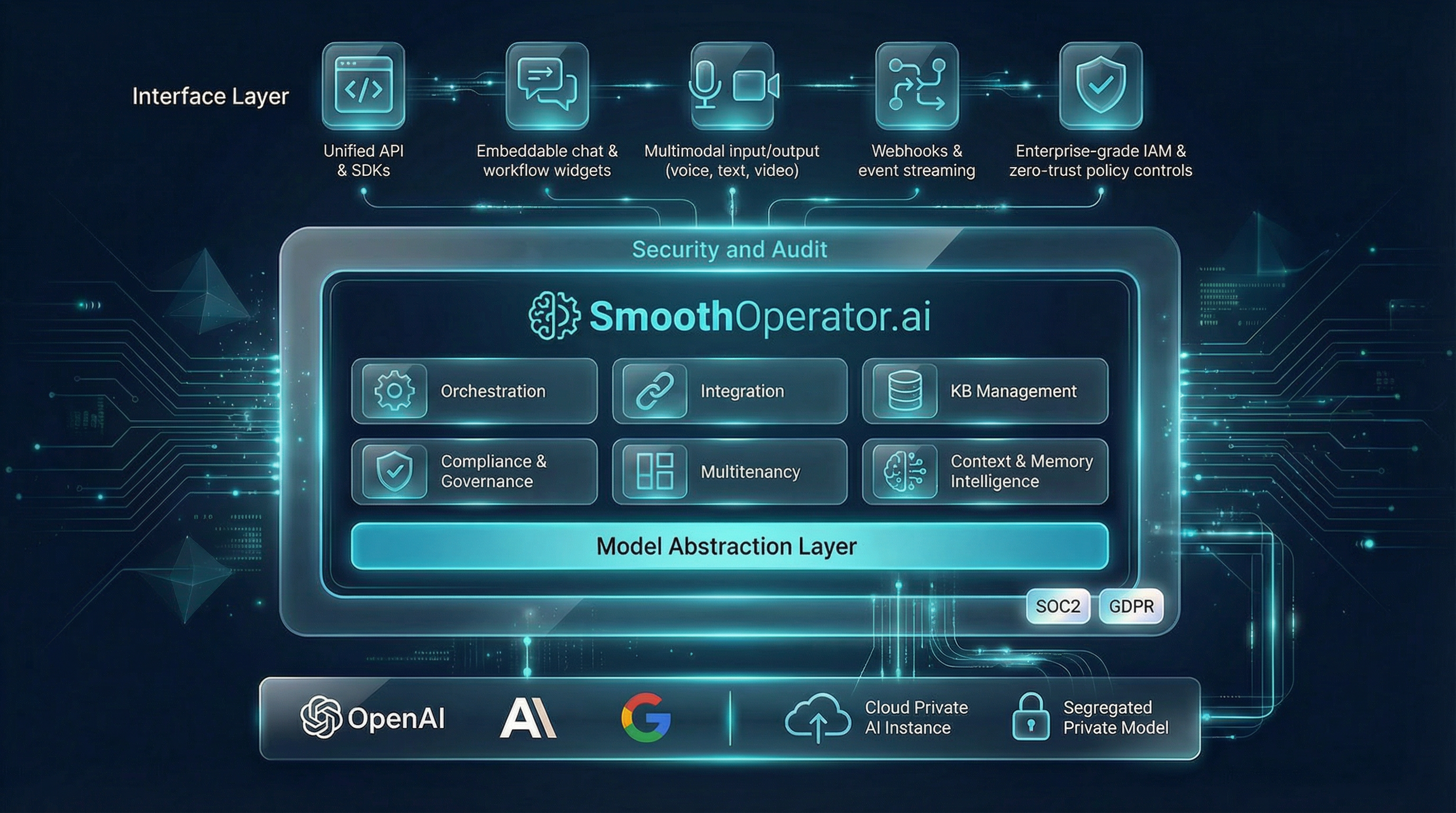

The Private Enterprise AI Architecture

A full-stack agent platform deployed inside your environment—on your infrastructure, with your models, under your control. The complete enterprise agent stack: private context, multi-agent execution, governance controls, and open integration, without centralizing your institutional memory in a vendor’s cloud.

Design Architecture

Structural components and integration layers

Two Execution Modes, One Coherent Platform

The platform supports two complementary execution modes that intelligently collaborate. Orchestrated multi-agent teams handle complex, multi-step reasoning; specialized vertical agents deliver sub-second responses for high-volume domain queries. Both run privately inside your environment.

Orchestrated Multi-Agent

A generalized system for complex, multi-step reasoning tasks. It employs a coordinated team of agents.

When a query requires deep analysis, the orchestration engine activates a structured reasoning pipeline. The system first decomposes complex questions into manageable sub-tasks, then systematically gathers information from across your knowledge base. Each piece of evidence is cross-referenced and validated before being synthesized into a comprehensive response. This deliberate, multi-phase approach ensures that answers to nuanced questions are thorough, accurate, and fully substantiated.

Specialized Vertical Agents

Highly optimized agents tuned for specific domain tasks. Ideal for high-volume, low-latency workflows.

For well-defined, repetitive queries within a specific domain, specialized agents deliver instant responses without the overhead of full orchestration. These agents are pre-configured with domain expertise—whether HR policies, legal clauses, technical documentation, or financial procedures—and can retrieve and format answers in milliseconds. They excel at high-throughput scenarios where consistency and speed matter more than exploratory reasoning.

Intelligent Interoperability

These two modes are not isolated—they are designed to work together. A multi-agent team can invoke specialized single agents when it needs rapid, domain-specific answers during complex reasoning. Conversely, a specialized agent can escalate to the full orchestration pipeline when it encounters a query that exceeds its scope. This bidirectional collaboration ensures you always get the optimal balance of speed and depth.

Orchestrated Multi-Agent: complex reasoning, multi-step analysis, cross-document synthesis

Specialized Vertical Agents: instant domain answers, high throughput, millisecond latency

Multi-Agent → Single Agent

During complex analysis, the orchestration team may need a quick policy lookup or terminology check. Instead of spinning up full reasoning, it calls a specialized agent for instant retrieval, then continues its synthesis with verified facts.

Single Agent → Multi-Agent

When a specialized agent receives a query beyond its domain—requiring cross-functional analysis or multi-document reasoning—it automatically escalates to the orchestration pipeline, ensuring the user gets a comprehensive answer without manual intervention.
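The two hand-offs described above can be sketched in a few lines. This is a minimal illustration, not the platform's implementation: `SpecializedAgent`, `orchestrate`, and `handle` are hypothetical names, and a dictionary lookup stands in for real retrieval.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SpecializedAgent:
    """Hypothetical fast-path agent that only knows one domain."""
    domain: str
    answers: dict[str, str]  # canned lookups standing in for real retrieval

    def try_answer(self, query: str) -> Optional[str]:
        # Return None to signal "out of scope" so the caller can escalate.
        return self.answers.get(query.lower().strip())


def orchestrate(query: str, lookup: Callable[[str], Optional[str]]) -> str:
    """Stand-in for the multi-agent pipeline. It may call back into a
    specialized agent for a quick fact (Multi-Agent -> Single Agent)."""
    fact = lookup("pto carryover limit")
    context = f" (using policy fact: {fact})" if fact else ""
    return f"[orchestrated] full analysis of {query!r}{context}"


def handle(query: str, agent: SpecializedAgent) -> str:
    quick = agent.try_answer(query)
    if quick is not None:
        return f"[{agent.domain}] {quick}"
    # Single Agent -> Multi-Agent: escalate when the query is out of scope.
    return orchestrate(query, agent.try_answer)


hr = SpecializedAgent("hr", {"pto carryover limit": "5 days"})
print(handle("PTO carryover limit", hr))
print(handle("Compare PTO policy against the new union contract", hr))
```

The first call is served on the fast path; the second falls through to the orchestration stand-in, which still reuses the specialized agent for its policy lookup.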

Single-Model Chatbot vs. Multi-Agent Orchestration

| Capability | Single-Model Chatbot | SmoothOperator.ai Multi-Agent |
|---|---|---|
| Complex Multi-Step Reasoning | | |
| Built-in Verification & Fact-Checking | | |
| Citation-Backed Responses | | |
| Sub-Second Domain Queries | | |
| Cross-Document Synthesis | | |
| Adaptive Mode Selection | | |
| Audit Trail & Observability | | |
| Enterprise RBAC & Multitenancy | | |

Enterprise-Grade Design Principles

Every architectural decision is driven by the requirements of enterprise deployment: security, reliability, flexibility, and governance at scale.

Vendor Independence via Model Abstraction Layer

The Model Abstraction Layer decouples your enterprise workflows from any single AI provider. This architectural decision ensures you are never locked into one vendor's pricing, capabilities, or roadmap. As new models emerge or existing ones improve, you can seamlessly swap providers without rewriting integrations or retraining staff. This flexibility protects your investment and keeps you at the frontier of AI capability.

Reliable Agentic Workflow Orchestration

Our orchestration engine coordinates multiple specialized AI agents working in concert to solve complex, multi-step problems. Unlike single-model approaches that struggle with nuanced tasks, our multi-agent pipeline assigns the right agent to each sub-task—planning, research, analysis, verification—ensuring higher accuracy and more reliable outputs. Built-in retry logic, fallback paths, and graceful degradation guarantee that workflows complete even when individual components encounter issues.
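As a rough picture of retry logic, fallback paths, and graceful degradation, the sketch below tries each agent in order, retries failures, and returns a degraded result instead of raising when everything fails. All names (`run_with_fallback`, `flaky_primary`, `stable_fallback`) are hypothetical, and the retry counts are arbitrary.

```python
import time
from typing import Callable, Sequence


def run_with_fallback(
    task: str,
    agents: Sequence[Callable[[str], str]],
    retries: int = 2,
    backoff_s: float = 0.0,
) -> str:
    """Try each agent in order; retry failures before moving to the next."""
    errors: list[str] = []
    for agent in agents:
        for attempt in range(1, retries + 1):
            try:
                return agent(task)
            except Exception as exc:  # a real system would catch narrower errors
                errors.append(f"{agent.__name__} attempt {attempt}: {exc}")
                time.sleep(backoff_s * attempt)
    # Graceful degradation: report the failure rather than raising.
    return f"degraded: could not complete {task!r} ({len(errors)} failed attempts)"


def flaky_primary(task: str) -> str:
    raise TimeoutError("model endpoint timed out")


def stable_fallback(task: str) -> str:
    return f"verified result for {task}"


print(run_with_fallback("summarize contract", [flaky_primary, stable_fallback]))
```

With only `flaky_primary` in the list, the same call returns a string starting with `degraded:` so the caller can decide how to present a partial outcome.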

Live Monitoring and Evaluation

Every agent action, every query, every response is logged and observable in real-time. Our monitoring dashboard provides complete visibility into system behavior, latency metrics, token usage, and quality scores. Automated evaluation pipelines continuously assess response accuracy against ground truth, flagging drift or degradation before it impacts users. This observability is essential for maintaining trust in AI-assisted decisions at enterprise scale.

Private RBAC Tenants

Enterprise deployments demand strict data isolation. Our multi-tenant architecture ensures that each business unit, department, or client operates in a logically isolated environment with its own knowledge base, access controls, and audit trails. Role-Based Access Control (RBAC) governs who can query, who can administer, and who can view sensitive outputs. Data never crosses tenant boundaries, satisfying even the most stringent compliance requirements.
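One way to picture how tenant isolation and RBAC combine is a check that tests the tenant boundary before any role permission. The roles, permission names, and `can_read` function below are illustrative assumptions, not the platform's actual schema.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "viewer": {"query"},
    "analyst": {"query", "view_sensitive"},
    "admin": {"query", "view_sensitive", "administer"},
}


@dataclass(frozen=True)
class User:
    name: str
    tenant: str
    role: str


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant: str
    sensitive: bool = False


def can_read(user: User, doc: Document) -> bool:
    # Tenant check comes first: no role can reach across a tenant boundary.
    if user.tenant != doc.tenant:
        return False
    perms = ROLE_PERMISSIONS.get(user.role, set())
    if doc.sensitive:
        return "view_sensitive" in perms
    return "query" in perms


alice = User("alice", tenant="finance", role="admin")
bob = User("bob", tenant="finance", role="viewer")
payroll = Document("payroll-2024", tenant="finance", sensitive=True)
roadmap = Document("roadmap", tenant="engineering")

print(can_read(alice, payroll), can_read(bob, payroll), can_read(alice, roadmap))
```

Note that even an admin in one tenant is denied a non-sensitive document in another, which is the property the isolation claim depends on.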

Open Integration with Domain Knowledge Providers

The platform connects to your existing knowledge ecosystem—SharePoint, Confluence, Google Drive, Salesforce, ServiceNow, and 200+ other enterprise systems. Our connector framework supports both push and pull ingestion patterns, real-time sync, and incremental updates. Domain-specific knowledge providers can be plugged in to enrich responses with industry terminology, regulatory context, or proprietary datasets without exposing raw data to external models.

Hybrid/Air-Gapped Option for Strict Governance

For organizations with the most demanding security postures—financial institutions, defense contractors, healthcare providers—we offer fully air-gapped deployments. Run the entire platform on your own infrastructure, using your own private models, with zero data egress to external networks. This hybrid architecture supports mixed deployments where some workloads use cloud AI while sensitive operations remain entirely on-premises.

Why This Architecture Matters

Core Capabilities

Advanced reasoning that goes beyond keyword matching. Our agents understand context, infer intent, and synthesize information from multiple sources to deliver accurate, nuanced responses.

Combines vector similarity search with traditional keyword matching for optimal recall and precision. Multi-level chunking preserves document structure and context across complex enterprise documents.
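The page does not say how the vector and keyword results are merged. One common technique is reciprocal rank fusion (RRF), shown here as an assumption rather than as the platform's method; the document IDs are made up.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs; higher fused score ranks first."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by score descending, then by ID so ties are deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["doc_b", "doc_a", "doc_d"]   # from embedding similarity
keyword_hits = ["doc_a", "doc_c", "doc_b"]  # from keyword matching
print(reciprocal_rank_fusion([vector_hits, keyword_hits]))
# -> ['doc_a', 'doc_b', 'doc_c', 'doc_d']
```

Documents that appear in both lists rise to the top, which is why fusion tends to help recall without sacrificing precision.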

Native parsing of PDFs, Word documents, spreadsheets, and technical diagrams. Preserves tables, formatting, and visual elements that other systems lose during ingestion.

Open Integration Standards

Connect to your existing stack via MCP, REST, webhooks, and native SDKs, with no vendor lock-in and no proprietary connectors.

What is Multi-Agent AI Orchestration?

Multi-Agent AI Orchestration is an advanced approach to enterprise AI where multiple specialized AI agents work together in a coordinated pipeline to solve complex problems. Unlike single-model chatbots that attempt to handle everything with one general-purpose AI, multi-agent systems assign different stages of a task to purpose-built agents—each optimized for a specific function.

In practice, this means one agent might handle task decomposition and planning, another focuses on information retrieval and research, a third performs analysis and synthesis, and a final agent handles verification and quality assurance. The orchestration layer coordinates these agents, manages data flow between them, and ensures the final output meets enterprise quality standards.

Answer

Each stage is handled by a specialized agent optimized for that specific function
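The stage-by-stage flow described above (planning, research, analysis, verification) can be sketched as a list of functions that share one state object. The stage bodies here are toy stand-ins, and names such as `RunState` and `PIPELINE` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RunState:
    question: str
    subtasks: list[str] = field(default_factory=list)
    evidence: dict[str, str] = field(default_factory=dict)
    draft: str = ""
    verified: bool = False


def plan(state: RunState) -> None:
    # Toy decomposition: split a compound question on " and ".
    state.subtasks = [part.strip() for part in state.question.split(" and ")]


def research(state: RunState) -> None:
    # Stand-in for retrieval: record one evidence note per sub-task.
    for task in state.subtasks:
        state.evidence[task] = f"source notes on {task}"


def analyze(state: RunState) -> None:
    state.draft = "; ".join(f"{t}: {state.evidence[t]}" for t in state.subtasks)


def verify(state: RunState) -> None:
    # Pass only if every sub-task is backed by evidence.
    state.verified = all(t in state.evidence for t in state.subtasks)


PIPELINE = [plan, research, analyze, verify]


def run(question: str) -> RunState:
    state = RunState(question)
    for stage in PIPELINE:
        stage(state)  # each stage reads and updates the shared state
    return state


result = run("revenue trends and churn drivers")
print(result.subtasks, result.verified)
```

Keeping stages as separate functions is what lets an orchestration layer swap, reorder, or observe them individually.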

Why It Matters

- Higher accuracy through specialized agents

- Built-in verification reduces hallucinations

- Transparent reasoning with audit trails

- Scalable complexity for enterprise workflows

How SmoothOperator.ai Implements It

- Pluggable Workflow Registry for custom pipelines

- Dual retrieval lanes for comprehensive search

- Evidence ledger for citation tracking

- Human-in-the-loop pause and review points

Spec-Driven Workflows

Declarative Composition, Not Code. Define agent behaviors in YAML and let the Meta-Builder translate natural language into executable specs.

Meta-Builder Concept

Users describe workflows in natural language. The Meta-Agent interprets intent and generates declarative YAML specs. The execution engine reads specs and orchestrates agents—no custom code required.

Benefits

- Version control for agent behaviors

- No code changes for workflow updates

- Portable across environments
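To make the spec-driven idea concrete, here is a minimal sketch of an engine that executes a declarative spec. The spec is shown as the Python dictionary a YAML loader would produce; its field names (`steps`, `agent`, `with`) and the registered agents are assumptions for illustration, not the platform's actual schema.

```python
from typing import Callable

# Hypothetical spec, shown as the dict a YAML loader would produce.
SPEC = {
    "name": "policy-summary",
    "steps": [
        {"agent": "retrieve", "with": {"source": "hr-handbook"}},
        {"agent": "summarize", "with": {"max_words": 5}},
    ],
}


def retrieve(text: str, source: str) -> str:
    # Stand-in for retrieval: tag the text with where it came from.
    return f"{text} [from {source}]"


def summarize(text: str, max_words: int) -> str:
    # Stand-in for summarization: keep the first max_words words.
    return " ".join(text.split()[:max_words])


REGISTRY: dict[str, Callable[..., str]] = {
    "retrieve": retrieve,
    "summarize": summarize,
}


def execute(spec: dict, text: str) -> str:
    """Run each step named in the spec, feeding output to the next step."""
    for step in spec["steps"]:
        agent = REGISTRY[step["agent"]]
        text = agent(text, **step.get("with", {}))
    return text


print(execute(SPEC, "Employees may carry over unused leave"))
# -> Employees may carry over unused
```

Changing the workflow means editing `SPEC`, which is data that can be versioned and diffed, while `execute` stays untouched.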

Unified, Layered Memory

Hybrid search with explicit lanes. Each lane has different persistence and retrieval characteristics.

Verbatim

Session-scoped context, cleared after conversation ends

Indexed Private

Your enterprise documents, permanently indexed and searchable

Web

Real-time web search results, cached briefly for efficiency

Evidence Memory

Committed evidence from past runs, continuously enriched as new runs complete
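The difference between the lanes is mostly a matter of how long entries live. The sketch below models that with an optional time-to-live per lane. The `Lane` class and the 300-second web cache window are assumptions; the page only says web results are cached briefly.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Lane:
    name: str
    ttl_s: Optional[float]  # None means entries never expire on their own
    entries: dict[str, tuple[str, float]] = field(default_factory=dict)

    def put(self, key: str, value: str, now: Optional[float] = None) -> None:
        stamp = time.monotonic() if now is None else now
        self.entries[key] = (value, stamp)

    def get(self, key: str, now: Optional[float] = None) -> Optional[str]:
        entry = self.entries.get(key)
        if entry is None:
            return None
        value, stored_at = entry
        current = time.monotonic() if now is None else now
        if self.ttl_s is not None and current - stored_at > self.ttl_s:
            del self.entries[key]  # expired: drop it and report a miss
            return None
        return value

    def clear(self) -> None:
        self.entries.clear()


LANES = {
    "verbatim": Lane("verbatim", ttl_s=None),        # cleared when the session ends
    "indexed_private": Lane("indexed_private", ttl_s=None),
    "web": Lane("web", ttl_s=300.0),                 # assumed short cache window
    "evidence": Lane("evidence", ttl_s=None),
}

LANES["web"].put("q", "cached result", now=0.0)
print(LANES["web"].get("q", now=100.0), LANES["web"].get("q", now=301.0))
```

Session-scoped behavior for the Verbatim lane would be a call to `clear()` when the conversation ends, rather than a timer.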

Provider Abstraction

From Local GPU to Hyperscaler

"Switching models is a policy change, not a code change."

BYOK Support

Bring Your Own Key—use your existing provider contracts and billing

Hot Swapping

Change providers without downtime or code deployment

Cost Optimization

Route queries to the most cost-effective model for each task
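The idea that switching models is a policy change can be illustrated by keeping the routing table as plain data and choosing the cheapest eligible provider per task. Provider names, tiers, and costs below are invented for the example and do not reflect real prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    name: str
    cost_per_1k_tokens: float  # illustrative numbers, not real prices
    tiers: frozenset[str]      # task tiers this provider may serve

    def complete(self, prompt: str) -> str:
        # Stand-in for a real model call behind a common interface.
        return f"[{self.name}] {prompt}"


# The routing policy is plain data; editing it changes which model is used.
POLICY = [
    Provider("local-gpu-small", 0.0, frozenset({"simple"})),
    Provider("hosted-medium", 0.5, frozenset({"simple", "complex"})),
    Provider("hosted-large", 3.0, frozenset({"complex"})),
]


def route(tier: str, policy: list[Provider]) -> Provider:
    """Pick the cheapest provider allowed to serve this task tier."""
    eligible = [p for p in policy if tier in p.tiers]
    if not eligible:
        raise LookupError(f"no provider configured for tier {tier!r}")
    return min(eligible, key=lambda p: p.cost_per_1k_tokens)


print(route("simple", POLICY).name)   # -> local-gpu-small
print(route("complex", POLICY).name)  # -> hosted-medium
```

Removing or repricing an entry in `POLICY` reroutes traffic without touching `route` or any calling code.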

Evidence-First Outputs

Trust Through Traceability. Every response includes committed evidence, validation gates, and explicit correction or refusal.

The Evidence Contract

- Evidence committed during run

- Citations reference committed sources only

- Exports assembled from evidence

Enforcement

Validation gates check every output against committed evidence. If claims can't be verified, the system provides explicit correction or refusal—never hallucinated answers.
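A validation gate of this kind can be approximated as a check that every sentence carries a citation and every citation points at committed evidence. The bracketed ID format (`[E1]`) and the `validation_gate` function are assumptions made for this sketch.

```python
import re

CITATION = re.compile(r"\[([A-Za-z0-9_-]+)\]")


def validation_gate(answer: str, committed: set[str]) -> str:
    """Return the answer unchanged, or an explicit refusal if a claim
    is uncited or cites something outside the committed evidence."""
    # Naive sentence split on "."; a real gate would need a proper splitter.
    sentences = [s.strip() for s in answer.split(".") if s.strip()]
    for sentence in sentences:
        cited = CITATION.findall(sentence)
        if not cited:
            return f"REFUSED: uncited claim: {sentence!r}"
        unknown = [c for c in cited if c not in committed]
        if unknown:
            return f"REFUSED: citation not in committed evidence: {unknown}"
    return answer


committed = {"E1", "E2"}
print(validation_gate("Revenue grew 4% [E1]. Churn fell [E2].", committed))
print(validation_gate("Revenue grew 4% [E3].", committed))
```

The second call is refused because `E3` was never committed during the run, which mirrors the rule that citations reference committed sources only.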

Full MCP Support

First-Class Citizen in the Open Ecosystem. Unified Tool Lattice with MCP tool outputs as evidence.

Unified Tool Lattice

Native tools, MCP tools, and platform tools all accessible through a single interface

MCP Tool Outputs as Evidence

Results from MCP tools are automatically committed to the evidence bundle for full traceability

Isolated & Attributable Runs

Every tool invocation is logged with full context, enabling complete audit trails
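A single interface over several kinds of tools, with every call recorded as evidence, might look like the sketch below. `ToolLattice` and its methods are hypothetical, and the "mcp" tool is an ordinary Python function standing in for a call to a real MCP server.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolLattice:
    tools: dict[str, tuple[str, Callable[..., Any]]] = field(default_factory=dict)
    evidence: list[dict[str, Any]] = field(default_factory=list)

    def register(self, name: str, kind: str, fn: Callable[..., Any]) -> None:
        # kind is a label such as "native", "mcp", or "platform".
        self.tools[name] = (kind, fn)

    def invoke(self, run_id: str, name: str, **kwargs: Any) -> Any:
        kind, fn = self.tools[name]
        output = fn(**kwargs)
        # Commit the call and its output so the run stays attributable.
        self.evidence.append({
            "run_id": run_id,
            "tool": name,
            "kind": kind,
            "args": kwargs,
            "output": output,
            "at": time.time(),
        })
        return output


lattice = ToolLattice()
lattice.register("word_count", "native", lambda text: len(text.split()))
lattice.register("ticket_lookup", "mcp", lambda ticket_id: {"id": ticket_id, "status": "open"})

print(lattice.invoke("run-1", "word_count", text="audit every tool call"))
print(lattice.invoke("run-1", "ticket_lookup", ticket_id="T-42"))
print([(e["tool"], e["kind"]) for e in lattice.evidence])
```

Because callers only ever go through `invoke`, there is one place to attach logging, run IDs, and evidence capture regardless of where a tool comes from.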

Embeddable & Multi-Tenant

Single execution plane with strict tenant isolation. Your app and operator cockpit share the same infrastructure.

Your App

Customer-facing interface

Operator Cockpit

Admin dashboard

Single Execution Plane

Shared infrastructure

Strict Tenant Isolation

Data never crosses tenant boundaries. Each tenant has isolated memory, audit logs, and access controls.

Same Execution Plane

Unified monitoring, logging, and observability across all tenants without compromising isolation.

Developer-First API

Integrate SmoothOperator.ai into your existing internal tools, Slack bots, or customer portals with our REST API.